Mixed Reality for Robotics

Description

When robots operate in environments shared with humans, they are expected to behave predictably, operate safely, and complete their tasks despite the uncertainty inherent in human interaction.

Preparing such a system for deployment often requires testing the robots in an environment shared with humans in order to resolve any unanticipated robot behaviors or reactions, which could be dangerous to the human.

In the case of a multi-robot system, uncertainty compounds and opportunities for error multiply, increasing the need for exhaustive testing in the shared environment but at the same time increasing the possibility of harm to both the robots and the human.

As the number of components of the system (humans, robots, etc.) increases, controlling and debugging the system becomes more difficult.

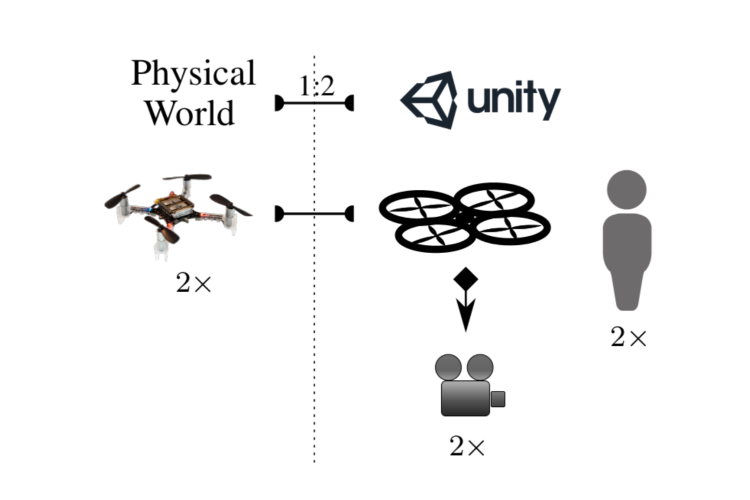

Allowing system components to operate in a combination of physical and virtual environments can provide a safer and simpler way to test these interactions, not only by separating the system components, but also by allowing a gradual transition of the system components into shared physical environments.

Such a Mixed Reality platform is a powerful tool for testing that can address these issues and has been used to varying degrees in robotics and other fields.

We present Mixed Reality as a tool for multi-robot research and discuss the necessary components for effective use.

Furthermore, we present practical applications with different robots (Crazyflie 2 and TurtleBot 2) and simulation platforms (Gazebo, V-REP, and Unity 3D).

Investigators

In collaboration with the Mixed Reality Laboratory (MxR).

- Wolfgang Hönig

- Christina Milanes

- Lisa Scaria

- Thai Phan (MxR)

- Mark Bolas (MxR)

- Nora Ayanian

Related Publications

- T. Phan, W. Hönig, and N. Ayanian. "Mixed Reality Collaboration between Human-Agent Teams (Extended Abstract)", in Proc. IEEE Conference on Virtual Reality and 3D User Interfaces (IEEE VR) (Poster), Reutlingen, Germany, March 2018. [PDF Preprint, Video]

- W. Hönig, C. Milanes, L. Scaria, T. Phan, M. Bolas, and N. Ayanian. "Mixed Reality for Robotics", in IEEE/RSJ Intl Conf. on Intelligent Robots and Systems, Hamburg, Germany, September 2015. [PDF Preprint, Video, Code, BibTeX]